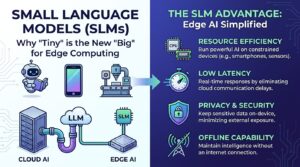

In recent years, artificial intelligence has been dominated by massive models with billions of parameters. While these large language models (LLMs) have shown impressive capabilities, they come with significant challenges: high computational costs, latency, and dependence on cloud infrastructure.

This is where Small Language Models (SLMs) are changing the game.

SLMs prove that bigger isn’t always better. In fact, when it comes to edge computing, smaller models are often faster, cheaper, and more practical.

What Are Small Language Models (SLMs)?

Small Language Models are compact AI models designed to perform language-related tasks with far fewer parameters than large models, typically millions to a few billion rather than tens or hundreds of billions.

Key characteristics:

- Lightweight architecture

- Faster inference time

- Lower memory usage

- Can run on local devices (phones, IoT, edge devices)

Unlike LLMs that require powerful GPUs and cloud servers, SLMs can operate efficiently on edge hardware.
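To see why fewer parameters mean lower memory usage, a rough back-of-the-envelope estimate helps: weight memory is approximately the number of parameters multiplied by the bits stored per parameter. The sketch below is a simplification that counts weights only (it ignores activations and runtime overhead). The function name and the 3-billion and 70-billion parameter sizes are hypothetical examples, not figures for any specific model.

```python
def estimate_weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed to hold model weights, in GB (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


# Hypothetical model sizes, chosen only for illustration.
small_4bit = estimate_weight_memory_gb(3e9, 4)      # 3B parameters, 4-bit weights
large_16bit = estimate_weight_memory_gb(70e9, 16)   # 70B parameters, 16-bit weights

print(f"3B params @ 4-bit:   {small_4bit:.1f} GB")   # 1.5 GB
print(f"70B params @ 16-bit: {large_16bit:.1f} GB")  # 140.0 GB
```

Under these assumptions, the smaller model's weights fit in about 1.5 GB, while the larger model needs about 140 GB for weights alone.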

What Is Edge Computing?

Edge computing refers to processing data closer to where it is generated, rather than sending it to centralized cloud servers.

Examples:

- Smartphones

- Smart cameras

- IoT devices

- Autonomous vehicles

- Wearables

This approach reduces latency and improves real-time decision-making.

Why “Tiny” Is the New “Big”

1. ⚡ Faster Performance

Because SLMs run locally, responses skip the network round trip to a cloud server. There’s no need to send data away and wait for a reply, so results arrive with much lower latency.

👉 Perfect for:

- Voice assistants

- Real-time translation

- Smart devices

2. 💰 Cost Efficiency

Running large models in the cloud is expensive. SLMs reduce:

- Server costs

- API costs

- Infrastructure dependency

This makes AI more accessible for startups and small businesses.

3. 🔒 Better Privacy

Since SLMs process data locally:

- Sensitive data stays on the device

- The risk of data breaches in transit or on third-party servers is reduced

👉 Ideal for:

- Healthcare apps

- Personal assistants

- Finance applications

4. 📶 Works Offline

SLMs can function without internet connectivity, making them highly reliable in low-network areas.

5. 🔋 Energy Efficient

Smaller models consume less power, which is critical for:

- Mobile devices

- IoT systems

- Battery-powered gadgets

Real-World Use Cases

📱 Smartphones

- On-device AI assistants

- Keyboard predictions

- Smart replies

🚗 Autonomous Vehicles

- Real-time object detection

- Decision-making at the edge

🏥 Healthcare

- Patient monitoring devices

- Local diagnosis tools

🏭 Industrial IoT

- Predictive maintenance

- Equipment monitoring

SLMs vs LLMs: Quick Comparison

| Feature | SLMs | LLMs |
| --- | --- | --- |
| Size | Small | Very Large |
| Speed | Fast | Slower |
| Cost | Low | High |
| Deployment | Edge devices | Cloud-based |
| Privacy | High | Moderate |
| Power Consumption | Low | High |

Challenges of SLMs

While SLMs are powerful, they do have limitations:

- Lower accuracy than large models on complex tasks

- Limited knowledge base

- Need for optimization and fine-tuning

- Trade-off between size and performance
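The trade-off between size and performance can be made concrete with quantization, one common way to shrink a model. The sketch below is a minimal, assumed example of symmetric 8-bit quantization: each weight is stored as an integer between -127 and 127 plus one shared scale factor. Moving from 32-bit floats to 8-bit integers cuts weight storage roughly 4x, at the cost of a small rounding error. The function names and weight values are made up for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.40, -0.63]  # made-up example values
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The worst-case rounding error is bounded by half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("quantized:", quantized)
print("max rounding error:", max_error)
```

The restored weights are close to the originals but not identical. That small loss of precision is the price paid for the smaller footprint.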

The Future of SLMs

The future of AI is moving toward efficient intelligence, not just bigger models.

Emerging trends include:

- Model compression techniques

- Knowledge distillation

- Hybrid models (SLM + Cloud support)

- On-device personalization
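Knowledge distillation, one of the trends listed above, trains a small "student" model to imitate the output distribution of a larger "teacher". The sketch below shows one common formulation of the distillation loss, assuming the KL divergence between temperature-softened softmax outputs, scaled by the temperature squared. The logits are invented numbers, and a real training loop would compute this with a deep learning framework rather than plain Python.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; a higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by temperature squared."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]        # made-up teacher logits
student_close = [3.5, 1.2, 0.4]  # student that roughly agrees with the teacher
student_far = [0.5, 1.0, 4.0]    # student that disagrees with the teacher

print("loss (close student):", distillation_loss(teacher, student_close))
print("loss (far student):  ", distillation_loss(teacher, student_far))
```

The student that agrees with the teacher gets the lower loss, so minimizing this quantity pulls the small model's predictions toward the large model's.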

Big tech companies are already investing heavily in SLMs for edge AI solutions.

Conclusion

Small Language Models are redefining how AI is deployed and used. In a world where speed, privacy, and efficiency matter more than ever, SLMs prove that “tiny” truly is the new “big”. As edge computing continues to grow, SLMs will become a core part of everyday technology, from smartphones to smart cities. Build these skills with GSInfotekh through the upcoming training sessions in our career-building program.