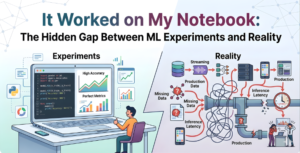

Machine learning has transformed industries, enabling intelligent systems in healthcare, finance, retail, and automation. However, many developers and data scientists face a frustrating problem: models that perform well during development often degrade or fail outright when deployed in real-world environments. This challenge is known as the ML experiments and reality gap, and it is one of the biggest obstacles to successful AI implementation.

Organizations using platforms from OpenAI, Google, and Microsoft invest heavily in ensuring their models work reliably beyond research environments. Understanding this gap is essential for anyone building production-ready ML systems.

What is the ML Experiments and Reality Gap?

The ML experiments and reality gap refers to the difference between how a machine learning model performs in a controlled development environment (such as a notebook) and how it performs in real-world production systems.

In notebooks, data is usually clean, structured, and static, and developers have full control over the environment. In production, systems face noisy data, unpredictable inputs, changing patterns, and infrastructure limitations. This difference creates the ML experiments and reality gap, causing models to fail or perform poorly after deployment.

Why Does This Gap Exist?

Several technical and operational factors contribute to the ML experiments and reality gap.

1. Data Drift and Changing Patterns

Real-world data constantly evolves. Customer behavior, market trends, and external factors change over time. When models encounter new patterns they weren’t trained on, the ML experiments and reality gap becomes visible through reduced accuracy.
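One way to make drift measurable is to compare the distribution of a feature at training time with its distribution in production. The sketch below uses the Population Stability Index (PSI), a swapped-in technique that the article itself does not name. The function name, the bin count, and the toy data are assumptions for illustration only.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one numeric feature; larger means more drift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero-width bins for constant data

    def bin_fractions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the training range land in the edge bins.
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bin_fractions(expected), bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Toy data (assumption): production has the same shape but is shifted upward.
training = [float(x % 50) for x in range(1000)]
production = [float(x % 50) + 20 for x in range(1000)]

psi_same = population_stability_index(training, training)
psi_shifted = population_stability_index(training, production)
```

A commonly quoted rule of thumb treats a PSI above roughly 0.2 as a shift worth investigating, but the right threshold depends on the feature and should be tuned per use case.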

2. Environment Differences

Notebooks run on local machines or other controlled environments. Production systems run on servers, cloud platforms, or distributed clusters, often with different hardware such as NVIDIA GPUs. Differences in hardware, dependency versions, and configuration widen the ML experiments and reality gap.
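A simple way to surface such differences is to record an environment fingerprint in both places and compare the two. The following is a minimal sketch using only the Python standard library; the function names and the sample fingerprints are hypothetical.

```python
import platform
from importlib import metadata


def environment_fingerprint(packages: list) -> dict:
    """Record interpreter, OS, and the installed version of each package."""
    info = {
        "python": platform.python_version(),
        "os": platform.system(),
        "machine": platform.machine(),
    }
    for name in packages:
        try:
            info[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            info[name] = "not installed"
    return info


def diff_environments(dev: dict, prod: dict) -> dict:
    """Return every key whose value differs between the two fingerprints."""
    keys = sorted(set(dev) | set(prod))
    return {
        k: (dev.get(k, "missing"), prod.get(k, "missing"))
        for k in keys
        if dev.get(k) != prod.get(k)
    }


# Hypothetical fingerprints, as if captured on a laptop and on a server.
dev_env = {"python": "3.11.4", "os": "Darwin", "numpy": "1.26.0"}
prod_env = {"python": "3.10.12", "os": "Linux", "numpy": "1.26.0"}
mismatches = diff_environments(dev_env, prod_env)
```

Here `mismatches` contains the `python` and `os` entries but not `numpy`, which matches in both environments. In practice the fingerprint would be saved alongside the trained model and checked when the service starts.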

3. Scalability Challenges

A model tested on small datasets may struggle with millions of real-time requests. Latency, memory usage, and performance constraints contribute significantly to the ML experiments and reality gap.
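Latency is easier to reason about when it is measured as percentiles over many requests instead of a single timing. The sketch below times a stand-in prediction function; `toy_model` and the request list are placeholders, and a real test would call the deployed model with realistic inputs.

```python
import statistics
import time
from typing import Callable, Sequence


def latency_percentiles(
    predict: Callable[[float], float], requests: Sequence[float]
) -> dict:
    """Time each call and report median, p95, and max latency in milliseconds."""
    timings_ms = []
    for request in requests:
        start = time.perf_counter()
        predict(request)
        timings_ms.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(timings_ms, n=100)
    return {
        "p50": statistics.median(timings_ms),
        "p95": cuts[94],
        "max": max(timings_ms),
    }


def toy_model(x: float) -> float:
    # Stand-in for a real model's predict function.
    return sum(i * x for i in range(1000))


report = latency_percentiles(toy_model, [float(i) for i in range(200)])
```

The tail (p95 and max) usually matters more than the median, because slow outliers are what users and upstream timeouts notice first.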

4. Lack of Monitoring

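In experiments, performance is actively observed on every run. In production, a model can keep returning predictions without raising any error while their quality quietly declines. Without monitoring, these failures go unnoticed, further increasing the ML experiments and reality gap.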

Real-World Example

A recommendation model may show 95% accuracy in a notebook using historical data. But after deployment, users behave differently, new products are introduced, and seasonal trends change. Without adaptation, the model’s accuracy drops, demonstrating the ML experiments and reality gap in action.

How to Bridge the ML Experiments and Reality Gap

Bridging the ML experiments and reality gap is essential for organizations that want to move from successful prototypes to reliable, production-ready machine learning systems. To do so, teams must adopt strong engineering practices, continuous monitoring, and operational discipline.

1. Adopt MLOps Practices

One of the most effective ways to reduce the ML experiments and reality gap is to implement MLOps practices. MLOps combines machine learning with DevOps principles to streamline the entire lifecycle of a model, from development to deployment and monitoring. It brings automation, version control, continuous integration, and continuous deployment (CI/CD) to machine learning systems. With MLOps, teams can deploy models consistently, test them thoroughly, and maintain them efficiently, which reduces manual errors and improves the reliability of models in production.
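In a CI/CD pipeline, one concrete form this takes is an automated quality gate that decides whether a newly trained model may replace the current one. The sketch below is one possible shape for such a gate; the `Metrics` fields, the tolerance, and the latency budget are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metrics:
    accuracy: float
    p95_latency_ms: float


def should_promote(
    candidate: Metrics,
    baseline: Metrics,
    max_accuracy_drop: float = 0.01,
    latency_budget_ms: float = 200.0,
) -> tuple:
    """Return the decision plus the reasons for any rejection."""
    reasons = []
    if candidate.accuracy < baseline.accuracy - max_accuracy_drop:
        reasons.append("accuracy regressed beyond tolerance")
    if candidate.p95_latency_ms > latency_budget_ms:
        reasons.append("p95 latency exceeds budget")
    return (not reasons, reasons)


baseline = Metrics(accuracy=0.91, p95_latency_ms=120.0)
ok, why = should_promote(Metrics(0.92, 150.0), baseline)
blocked, why_blocked = should_promote(Metrics(0.85, 250.0), baseline)
```

The first candidate passes both checks. The second is blocked for two reasons, and returning those reasons makes the pipeline log explain itself.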

2. Use Production-Like Testing Environments

Testing models only in development environments is a major reason for the ML experiments and reality gap. To avoid unexpected failures, models should be tested using production-like environments that closely mimic real-world conditions. This includes using real or realistic datasets, simulating live traffic, and evaluating performance under different workloads. Production-like testing helps identify issues related to scalability, latency, and data inconsistencies before deployment, ensuring smoother transitions from experimentation to real-world usage.
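One inexpensive piece of production-like testing is to replay realistic records, including malformed ones, through the same input checks the live service will use. The sketch below assumes a hypothetical two-field schema; the field names and ranges are made up for illustration.

```python
from typing import Any

# Hypothetical schema: field name -> (expected type, allowed min, allowed max).
SCHEMA = {
    "age": (int, 0, 120),
    "basket_value": (float, 0.0, 100_000.0),
}


def validate_record(record: dict) -> list:
    """Return a list of problems; an empty list means the record is usable."""
    problems = []
    for field, (kind, low, high) in SCHEMA.items():
        value: Any = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not isinstance(value, kind) or isinstance(value, bool):
            problems.append(f"{field}: expected {kind.__name__}")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


# Replayed sample (assumption): one clean record and three typical defects.
replayed = [
    {"age": 34, "basket_value": 59.90},
    {"age": -1, "basket_value": 12.00},
    {"age": 51},
    {"age": "42", "basket_value": 10.0},
]
rejected = {}
for index, record in enumerate(replayed):
    problems = validate_record(record)
    if problems:
        rejected[index] = problems
```

Records 1, 2, and 3 are rejected for an out-of-range value, a missing field, and a wrong type respectively. Notebook datasets rarely contain any of these cases, which is why they tend to surface only after deployment.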

3. Monitor Models Continuously

Continuous monitoring is critical to ensure long-term model performance. Even after successful deployment, models can experience performance degradation due to data drift, changing user behavior, or system updates. Monitoring key metrics such as accuracy, latency, error rates, and prediction quality helps teams detect problems early. By proactively monitoring models, organizations can quickly address issues and prevent failures, thereby minimizing the ML experiments and reality gap over time.
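As a minimal illustration of such monitoring, the sketch below tracks accuracy over a sliding window of recent predictions and raises a flag when it falls below a threshold. The class name, window size, and threshold are assumptions, and it presumes that true labels eventually arrive for comparison.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the most recent labelled predictions."""

    def __init__(self, window: int = 100, alert_below: float = 0.8) -> None:
        self.outcomes = deque(maxlen=window)  # True for each correct prediction
        self.alert_below = alert_below

    def record(self, predicted: object, actual: object) -> None:
        self.outcomes.append(predicted == actual)

    @property
    def accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 1.0

    def should_alert(self) -> bool:
        # Wait for a full window so a few early misses do not trigger noise.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.accuracy < self.alert_below


monitor = RollingAccuracyMonitor(window=10, alert_below=0.8)
for _ in range(10):
    monitor.record("buy", "buy")   # every prediction is correct
healthy = monitor.should_alert()
for _ in range(5):
    monitor.record("buy", "skip")  # recent behaviour changes
degraded = monitor.should_alert()
```

After the five recent misses, the window holds five correct and five incorrect outcomes, so accuracy is 0.5 and the alert fires. The same pattern extends to latency and error-rate metrics.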

4. Automate Retraining Pipelines

Data in real-world environments is constantly evolving, which can cause models to become outdated. Automated retraining pipelines ensure that models are regularly updated with fresh data, allowing them to adapt to new patterns and maintain accuracy. Automation also reduces manual effort and ensures faster updates, making models more reliable in dynamic environments. This continuous improvement process plays a key role in closing the ML experiments and reality gap.
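The decision of when to retrain can itself be automated with a small rule. The sketch below assumes three hypothetical signals: the age of the current model, a drift score supplied by monitoring, and the number of newly labelled rows. All thresholds are placeholders.

```python
from datetime import datetime, timedelta


def should_retrain(
    last_trained: datetime,
    now: datetime,
    drift_score: float,
    new_labelled_rows: int,
    max_age: timedelta = timedelta(days=30),
    drift_threshold: float = 0.2,
    min_new_rows: int = 1_000,
) -> bool:
    """Retrain when the model is stale or drifted, and enough new data exists."""
    if new_labelled_rows < min_new_rows:
        return False  # not enough fresh data to learn anything new
    too_old = now - last_trained > max_age
    drifted = drift_score > drift_threshold
    return too_old or drifted


trained_at = datetime(2024, 1, 1)
stale = should_retrain(trained_at, datetime(2024, 3, 1), 0.05, 5_000)
drifted = should_retrain(trained_at, datetime(2024, 1, 10), 0.35, 5_000)
no_data = should_retrain(trained_at, datetime(2024, 3, 1), 0.35, 200)
fresh = should_retrain(trained_at, datetime(2024, 1, 10), 0.05, 5_000)
```

A scheduler could call a function like this daily and start the training job only when it returns `True`, which avoids retraining on too little data or retraining a model that is still healthy.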

5. Ensure Reproducibility

Reproducibility is a fundamental requirement for successful machine learning deployment. Teams should version their datasets, code, model parameters, and configurations, so that every trained model can be traced back to exactly what produced it. Proper documentation and experiment tracking tools help ensure that models can be recreated and validated consistently. Reproducibility reduces confusion between development and production versions, improving stability and narrowing the ML experiments and reality gap.
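One lightweight way to tie a model to its inputs is to hash the configuration, the data, and the code version into a single run identifier, and to seed any randomness. The sketch below uses only the standard library; the function name, the config keys, and the `abc123` code version are hypothetical.

```python
import hashlib
import json
import random


def run_fingerprint(config: dict, data_bytes: bytes, code_version: str) -> str:
    """Hash everything that determines a training run into one short ID."""
    payload = json.dumps(config, sort_keys=True).encode("utf-8")
    digest = hashlib.sha256()
    for part in (payload, data_bytes, code_version.encode("utf-8")):
        # Length-prefix each part so different splits cannot collide.
        digest.update(len(part).to_bytes(8, "big"))
        digest.update(part)
    return digest.hexdigest()[:16]


config = {"learning_rate": 0.01, "epochs": 5, "seed": 42}
data = b"user_id,label\n1,0\n2,1\n"  # stand-in for the real dataset bytes
run_a = run_fingerprint(config, data, "abc123")
run_b = run_fingerprint({"seed": 42, "epochs": 5, "learning_rate": 0.01}, data, "abc123")
run_c = run_fingerprint({**config, "epochs": 6}, data, "abc123")

# Seeding makes the pseudo-random parts of a run repeatable.
random.seed(config["seed"])
first = [random.random() for _ in range(3)]
random.seed(config["seed"])
second = [random.random() for _ in range(3)]
```

`run_a` and `run_b` match because key order does not affect the hash, while `run_c` differs because one hyperparameter changed. Storing this ID with the deployed model makes it possible to check that production is running the version that was actually evaluated.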

Best Practices for Production-Ready ML

- Use containerization tools like Docker

- Implement CI/CD pipelines for ML

- Track experiments using ML tracking tools

- Monitor model performance continuously

- Automate deployment and retraining

These practices help organizations reduce the ML experiments and reality gap and build reliable AI systems.

Conclusion

The journey from notebook to production is more complex than building an accurate model. The ML experiments and reality gap highlights the importance of scalability, monitoring, infrastructure, and operational discipline. By adopting MLOps, testing thoroughly, and monitoring continuously, organizations can ensure their machine learning models perform successfully in real-world environments. Closing the ML experiments and reality gap is essential for delivering reliable, scalable, and impactful AI solutions.