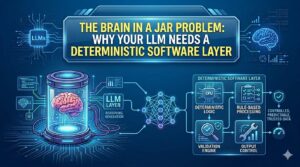

The Brain in a Jar Problem: Why Your LLM Needs a Deterministic Software Layer

Large Language Models (LLMs) like those developed by OpenAI are powerful—but they come with a critical limitation often described as the Brain in a Jar Problem.

The Brain in a Jar Problem refers to a system that can “think” (generate responses) but cannot reliably act, verify, or control outcomes in the real world. LLMs are excellent at generating text, but on their own they offer no deterministic execution, which makes their behavior hard to predict in production systems.

Understanding the Brain in a Jar Problem is essential if you’re building AI-driven applications that need consistency, accuracy, and control.

What is the Brain in a Jar Problem?

Imagine a brain floating in a jar. It can think, reason, and respond—but it has no direct connection to reality.

That’s exactly what happens when you rely solely on an LLM.

The Brain in a Jar Problem occurs because:

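- LLMs generate probabilistic outputs (not guaranteed correct)

- They cannot strictly enforce rules; an instruction in a prompt is usually followed, not always

- They have no persistent memory between calls and no direct control over external systems unless the surrounding software provides them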

This means your AI may sound confident—but still be wrong.

Why LLMs Alone Are Not Enough

While LLMs are impressive, the Brain in a Jar Problem highlights several limitations:

1. Non-Deterministic Outputs

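Because an LLM’s output is usually sampled from a probability distribution, the same input can produce different outputs. That inconsistency makes it risky to let the model’s raw output drive critical systems.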
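To make the point concrete, here is a toy sketch of sampled versus greedy selection. It is not how any particular model is implemented; the token names and scores are made up for illustration.

```python
import math
import random

# Hypothetical scores a model might assign to three candidate next tokens.
scores = {"approve": 2.0, "reject": 1.5, "escalate": 1.0}

def sample_token(scores, temperature, rng):
    """Pick a token at random, weighted by a softmax over the scores."""
    tokens = list(scores)
    weights = [math.exp(scores[t] / temperature) for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

def greedy_token(scores):
    """Always pick the highest-scoring token; same input, same output."""
    return max(scores, key=scores.get)

if __name__ == "__main__":
    rng = random.Random()
    # Sampling: repeated runs over the same scores can disagree.
    print([sample_token(scores, 1.0, rng) for _ in range(5)])
    # Greedy selection: the result never changes for these scores.
    print([greedy_token(scores) for _ in range(5)])
```

Sampling is what makes generated text varied, and it is also why the same prompt cannot be assumed to give the same answer twice.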

2. No Built-in Validation

LLMs don’t verify facts. They generate the response that is statistically likely, whether or not it is true.

3. Lack of Execution Control

On its own, an LLM only produces text. It cannot reliably carry out structured operations such as:

- Database updates

- Transactions

- API workflows

4. Hallucinations

One of the biggest symptoms of the Brain in a Jar Problem is hallucination—confident but incorrect answers.

What is a Deterministic Software Layer?

A deterministic software layer is the structured, rule-based code that surrounds your LLM. Given the same input, it always behaves the same way.

It ensures:

- Predictable outputs

- Controlled workflows

- Verified results

Instead of letting the LLM “decide everything,” you use it as a component, not the entire system.

How the Deterministic Layer Solves the Problem

To overcome the Brain in a Jar Problem, you need to combine intelligence with control.

🔹 1. Input Validation

The deterministic layer filters and formats inputs before sending them to the LLM.
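A minimal sketch of what that filtering might look like, assuming a simple text-in, prompt-out flow. The length limit and the prompt template are hypothetical choices, not recommendations.

```python
MAX_INPUT_CHARS = 500  # hypothetical limit; tune for your application

def validate_input(raw: str) -> str:
    """Drop control characters, trim whitespace, and reject bad input."""
    cleaned = "".join(
        ch for ch in raw if ch.isprintable() or ch in "\n\t"
    ).strip()
    if not cleaned:
        raise ValueError("input is empty")
    if len(cleaned) > MAX_INPUT_CHARS:
        raise ValueError("input is too long")
    return cleaned

def build_prompt(raw: str) -> str:
    """Format validated input into the prompt sent to the LLM."""
    return f"User request:\n{validate_input(raw)}"
```

Bad input is rejected before any model call is made, so the failure is cheap and predictable.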

🔹 2. Output Constraints

You enforce structured outputs (JSON, templates, schemas).
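One way to enforce a structure is to parse the model’s reply as JSON and check it against the fields you expect. The sketch below uses only the standard library; the field names are assumptions made for illustration.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"intent": str, "date": str, "time": str}

def parse_llm_output(text: str) -> dict:
    """Return the parsed reply, or raise ValueError if it breaks the schema."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            raise ValueError(f"missing field: {name}")
        if not isinstance(data[name], expected_type):
            raise ValueError(f"field {name} has the wrong type")
    extra = set(data) - set(REQUIRED_FIELDS)
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    return data
```

Anything that fails the check never reaches the rest of the system, no matter how plausible the text looks.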

🔹 3. Rule-Based Logic

Critical decisions are handled by code—not AI guesses.
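As an illustration, suppose the LLM extracts a refund amount from a customer message. The decision about what to do with it can stay in plain code. The refund scenario and the threshold below are hypothetical.

```python
from decimal import Decimal

AUTO_APPROVE_LIMIT = Decimal("50.00")  # hypothetical policy threshold

def decide_refund(requested: Decimal, order_total: Decimal) -> str:
    """Apply the refund policy; the LLM only supplies the requested amount."""
    if requested <= 0 or requested > order_total:
        return "reject"
    if requested <= AUTO_APPROVE_LIMIT:
        return "approve"
    return "escalate"
```

The policy is readable, testable, and gives the same answer every time for the same numbers.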

🔹 4. Tool Integration

The system connects the LLM with:

- APIs

- Databases

- External services
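A common pattern is to let the LLM name a tool and its arguments, while code decides whether that call is allowed and actually runs it. The sketch below assumes the model’s reply has already been parsed into a dictionary; the tool names and stub functions are hypothetical stand-ins for real integrations.

```python
import inspect
from typing import Callable

def get_weather(city: str) -> str:
    # Stand-in for a real API call.
    return f"(stub) weather for {city}"

def lookup_order(order_id: str) -> str:
    # Stand-in for a real database query.
    return f"(stub) order {order_id}"

# Allowlist: only these tools can ever be run on the model's behalf.
TOOLS: dict[str, Callable[..., str]] = {
    "get_weather": get_weather,
    "lookup_order": lookup_order,
}

def dispatch(tool_call: dict) -> str:
    """Run an allowlisted tool after checking its arguments."""
    name = tool_call.get("tool")
    args = tool_call.get("args", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("args must be an object")
    func = TOOLS[name]
    try:
        # Check the arguments against the function signature before calling.
        inspect.signature(func).bind(**args)
    except TypeError as exc:
        raise ValueError(f"bad arguments for {name}: {exc}") from exc
    return func(**args)
```

The model can suggest a call, but it can never invoke something outside the allowlist.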

🔹 5. Error Handling

The deterministic layer catches failures, such as malformed output or a failed API call, and then retries, corrects, or reports them.
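A minimal retry loop might look like the following. Here `generate` is a placeholder for whatever function calls your model, and the retry count and the wording of the follow-up prompt are assumptions.

```python
import json
from typing import Callable

def call_with_retries(
    generate: Callable[[str], str], prompt: str, max_attempts: int = 3
) -> dict:
    """Call the model until it returns a JSON object, or give up."""
    last_error = None
    for _ in range(max_attempts):
        raw = generate(prompt)
        try:
            data = json.loads(raw)
            if not isinstance(data, dict):
                raise ValueError("expected a JSON object")
            return data
        except ValueError as exc:  # json.JSONDecodeError is a ValueError
            last_error = exc
            # Tell the model what went wrong before the next attempt.
            prompt = (
                f"{prompt}\n\nThe previous reply was invalid ({exc}). "
                "Reply with a JSON object only."
            )
    raise RuntimeError(f"gave up after {max_attempts} attempts: {last_error}")
```

The bounded attempt count matters: the caller gets either a valid result or a clear error, never an endless loop.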

Real-World Example

Without a deterministic layer:

User asks: “Book a meeting tomorrow at 10 AM”

LLM response: “Your meeting is scheduled!” ❌ (No real action taken)

With a deterministic layer:

- Parse intent

- Validate date/time

- Call calendar API

- Confirm booking

✅ Actual outcome achieved

This is how you address the Brain in a Jar Problem in production systems: the LLM interprets the request, and code performs and confirms the action.
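The four steps in the example can be sketched end to end. Everything here is a stand-in: `extract_intent` represents an LLM call that returns structured fields, and `FakeCalendar` represents a real calendar API client. Both are hypothetical.

```python
from datetime import datetime, timedelta

class FakeCalendar:
    """Hypothetical stand-in for a real calendar API client."""

    def __init__(self):
        self.events = []

    def create_event(self, title: str, start: datetime) -> str:
        self.events.append((title, start))
        return f"evt-{len(self.events)}"

def extract_intent(message: str) -> dict:
    """Stand-in for an LLM call; a real one would parse the message."""
    return {"intent": "book_meeting", "day_offset": 1, "time": "10:00"}

def book_meeting(message: str, calendar: FakeCalendar, now: datetime) -> str:
    # 1. Parse intent (the only step the LLM is responsible for).
    parsed = extract_intent(message)
    if parsed.get("intent") != "book_meeting":
        raise ValueError("unsupported intent")
    # 2. Validate date/time in code; strptime raises on a malformed time.
    clock = datetime.strptime(parsed["time"], "%H:%M").time()
    day = now.date() + timedelta(days=int(parsed["day_offset"]))
    start = datetime.combine(day, clock)
    if start <= now:
        raise ValueError("meeting must be in the future")
    # 3. Call the calendar API.
    event_id = calendar.create_event("Meeting", start)
    # 4. Confirm only after the booking actually exists.
    return f"Booked {event_id} for {start:%Y-%m-%d %H:%M}"
```

The confirmation message is built from the API result, not from the model’s text, so the user is told a meeting exists only when it does.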

Best Practices to Avoid the Brain in a Jar Problem

To build reliable AI systems:

- Keep logic in deterministic code

- Design clear workflows

- Add validation layers

- Log and monitor outputs

The more you depend purely on AI, the worse the Brain in a Jar Problem becomes.
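For the logging and monitoring practice, a thin wrapper around the model call is often enough to start with. This sketch uses Python’s standard `logging` module; the logger name and the logged fields are arbitrary choices, and `generate` is again a placeholder for your model call.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("llm_calls")  # hypothetical logger name

def logged_call(generate: Callable[[str], str], prompt: str) -> str:
    """Call the model and record size and latency for every request."""
    started = time.perf_counter()
    try:
        output = generate(prompt)
    except Exception:
        # Log the failure with a traceback, then let the caller handle it.
        logger.exception("llm call failed prompt_chars=%d", len(prompt))
        raise
    elapsed_ms = (time.perf_counter() - started) * 1000
    logger.info(
        "llm call ok prompt_chars=%d output_chars=%d elapsed_ms=%.1f",
        len(prompt), len(output), elapsed_ms,
    )
    return output
```

Logging sizes rather than full text is a deliberate choice here, since prompts may contain user data. Adjust it to your own privacy requirements.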

Conclusion

The Brain in a Jar Problem reminds us that intelligence alone is not enough—execution matters. LLMs are powerful “brains,” but without a deterministic software layer, they remain disconnected from real-world reliability.

The future of AI systems lies in hybrid architecture:

👉 LLM for intelligence

👉 Deterministic layer for control

Solve the Brain in a Jar Problem, and you move from experimental AI to production-ready systems.